Key Takeaways

- Google removes reviews that violate one of seven policy categories: fake engagement, off-topic, conflict of interest, restricted content, harassment, impersonation, and personal information.

- "Not a customer" is not a violation. Reviewers are not required to be paying customers — only to have had a real interaction with the business.

- Opinions are protected. A reviewer saying "the food was cold" is not a fake review. A reviewer claiming specific events that did not happen is.

- Fake 5-star reviews count too. Google and the FTC both treat purchased or incentivized positive reviews as fake engagement.

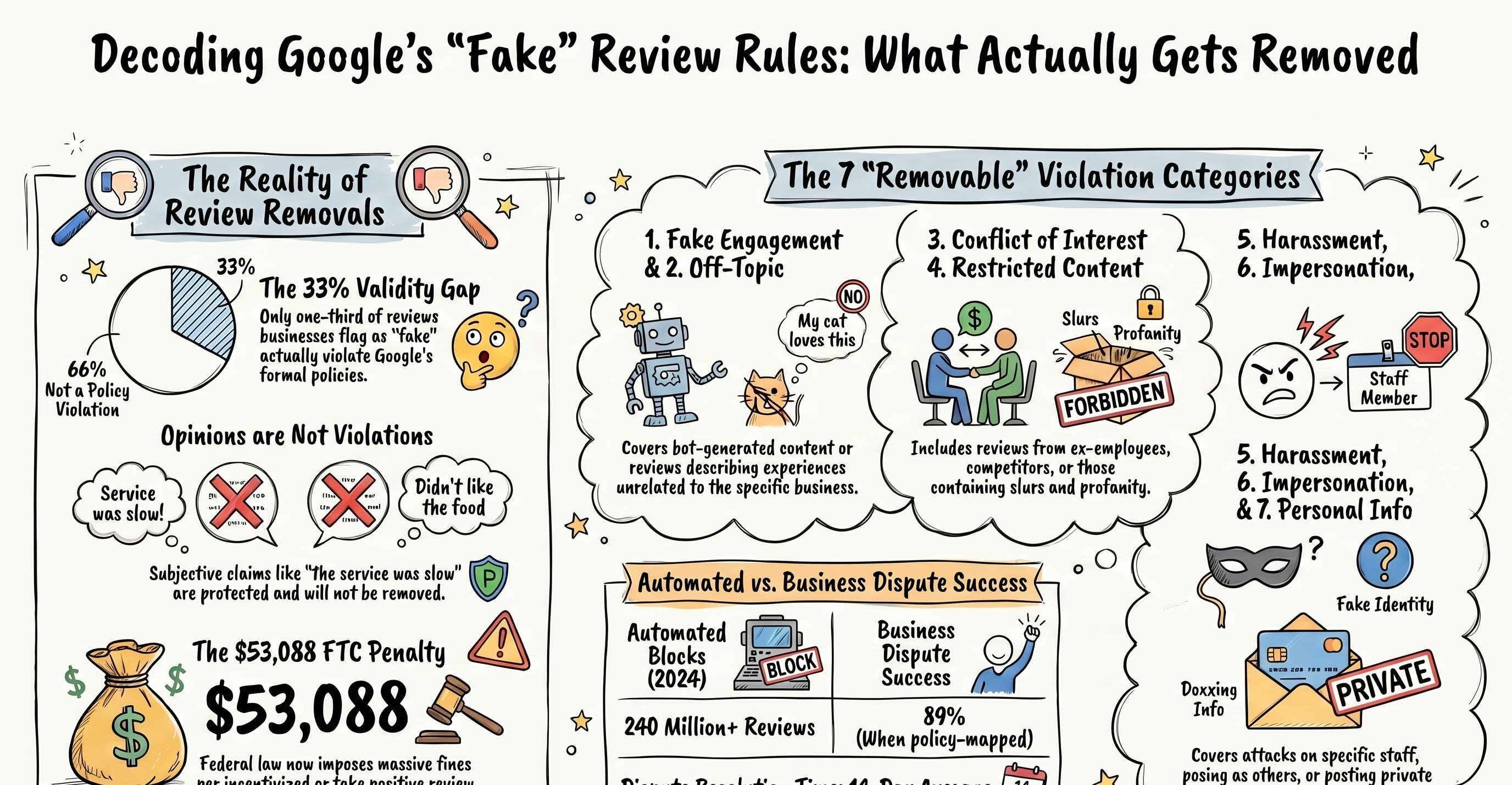

- Only ~33% of reviews businesses call "fake" actually violate Google's policies. Knowing which is which saves weeks of wasted dispute time.

Every business owner we talk to has their own definition of a fake Google review. Usually it's "a review I disagree with." Google's definition is narrower — and stricter. Across the 2,400+ disputes we've filed, the biggest single predictor of whether a removal will succeed is not how angry the business is, but whether the review actually fits one of Google's seven formal violation categories.

This is the practical map of which reviews Google removes, which it doesn't, and what the policy language actually says when your appeal lands on a human moderator's desk.

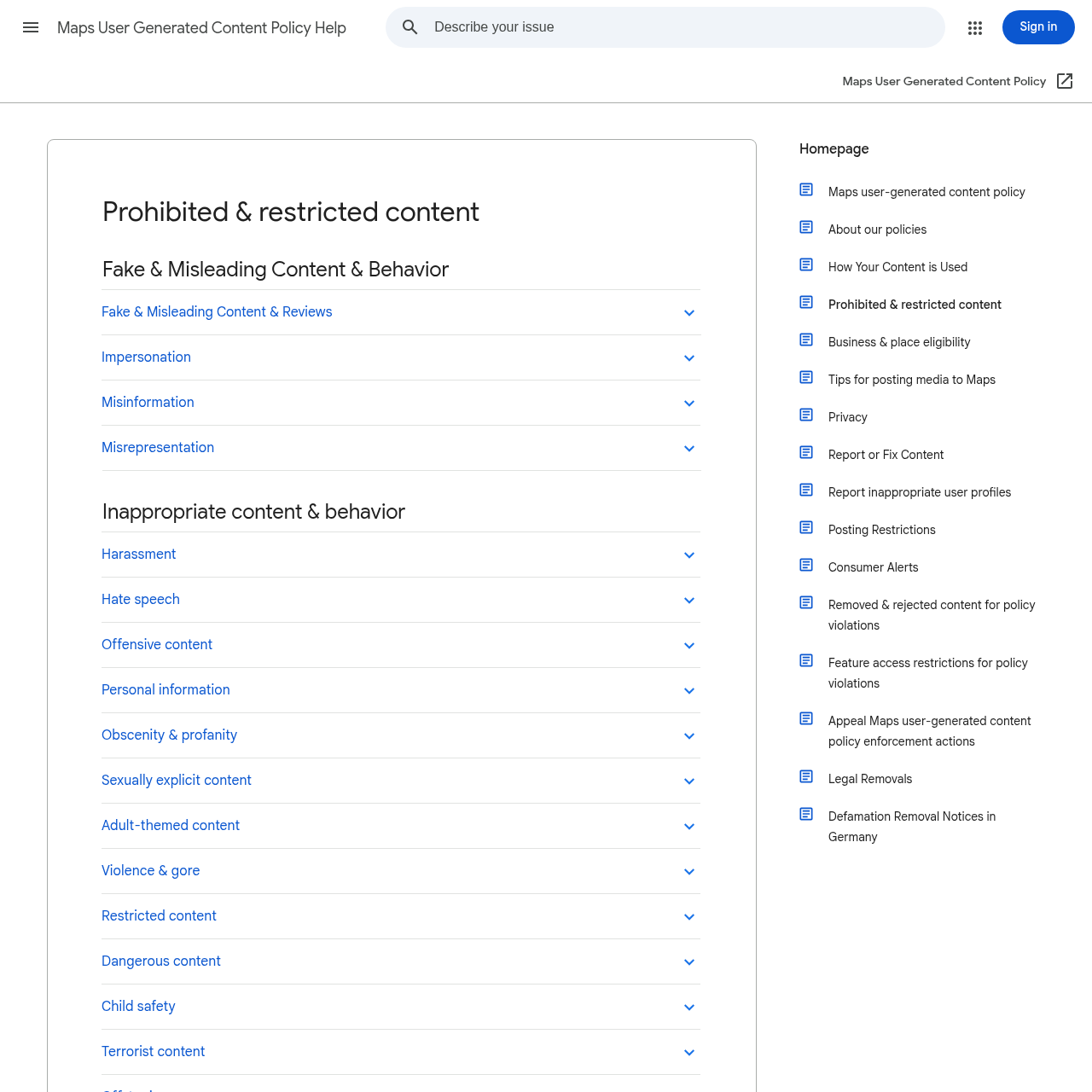

Google's formal definition of a fake review

Google's Prohibited and Restricted Content policy defines fake engagement as content that is "not based on a real experience" or "does not accurately represent the location, product, or service." That is the governing standard. It is a platform policy, not a statute, but it is the test every appeal is measured against. Everything else (the seven categories below, the evidence tiers, the case-by-case judgment calls) flows from that single definition.

The practical test: would an unbiased third party, reading this review alongside the available evidence, conclude the reviewer had no real interaction with this business? If yes, you have a fake-engagement case. If the reviewer clearly interacted with the business but described it negatively, you do not.

The 7 policy categories Google actually enforces

| Violation category | Policy description | Typical evidence needed | Difficulty of removal | Source |
|---|---|---|---|---|
| Personal information | Reviews containing full names, phone numbers, physical addresses, or other doxxing-level details. | Presence of private personal data within the review text. | Aggressive removal/High priority | [1] |
| Restricted content | Content containing profanity, slurs, sexually explicit language, and hate speech. | Automated filters; manual flag of prohibited language. | Fastest (often 24–72 hours) | [1] |
| Off-topic | Reviews that do not describe an experience with the business, such as reviews of neighboring businesses or political complaints. | Content analysis of the review text itself. | Faster than most | [1] |
| Fake engagement | Content created by bots, AI, coordinated networks, or individuals without a genuine interaction with the business. | Pattern evidence: sudden bursts of reviews, reviewer profiles with shared geographic/temporal signatures, or generic phrasing. | Not stated | [1] |
| Conflict of interest | Reviews from current or former employees, competitors, or anyone with an active dispute against the business. | Documentary evidence: LinkedIn profiles, employment records, termination paperwork, or court filings. | Not stated | [1] |
| Harassment | Personal attacks, threats, bullying directed at specific staff, or targeted discrimination. | Evidence of naming specific employees and accusing them of unproven criminal behavior. | Not stated | [1] |
| Impersonation | Reviewers posing as employees, customers, or other affiliated parties they are not. | Identification of unambiguous policy violation regarding identity. | Not stated | [1] |

Every successful removal request we file cites at least one of these seven categories. Some reviews fit more than one: an ex-employee review that uses profanity is both a conflict-of-interest violation and a restricted-content violation. Appeals that cite multiple violations consistently outperform single-category appeals.

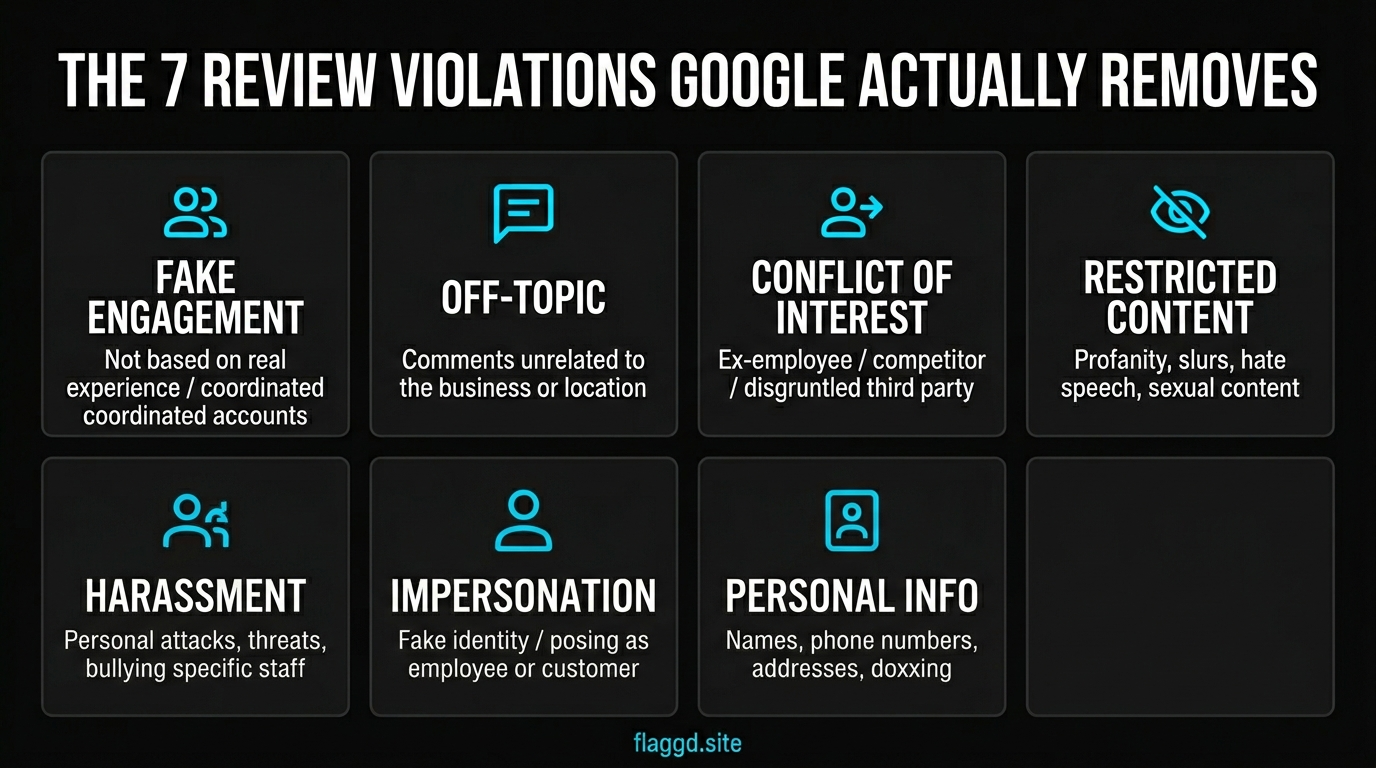

1. Fake engagement

The headline category. Content created by bots, AI, coordinated networks, or anyone who did not have a genuine interaction with the business. Proof almost always comes from pattern evidence — a sudden burst of 1-star reviews against a long 5-star history, reviewer profiles that share geographic or temporal signatures, or reviews so generic they could apply to any business.

2. Off-topic

Reviews that do not describe an experience with the business being reviewed. Classic examples: a review of your restaurant that is actually about the parking garage next door, or a review that is really a political complaint about the neighborhood. Off-topic is usually clear from the review text, which makes these removals faster than most.

3. Conflict of interest

Reviews from current or former employees, reviews from competitors, and reviews from anyone with an active dispute against the business. This is where documentary evidence matters most. LinkedIn profiles that show the reviewer works at a competing firm, employment records for ex-staff, court filings for opposing litigants — each of these converts a weak "this feels personal" dispute into a strong provable case.

4. Restricted content

Profanity, slurs, sexually explicit language, and hate speech. Google's automated filters handle most of this before a human ever sees it — which is why profanity-driven removals are often the fastest, clearing inside 24–72 hours. For a deeper breakdown of what the automated pipeline catches, see our Google review removal timeline breakdown.

5. Harassment

Personal attacks, threats, bullying directed at specific named staff, and targeted discrimination. A review that names a specific employee and accuses them of criminal behavior without evidence is often removable under harassment even when the fake-engagement angle is harder to prove.

6. Impersonation

Reviewers posing as employees, customers, or other affiliated parties they are not. Impersonation is rare but high-value — these reviews are almost always removed when identified, because the policy violation is unambiguous.

7. Personal information

Reviews containing full names, phone numbers, physical addresses, or other doxxing-level personal details. Google removes these aggressively. Exception: if the business name itself contains a personal name ("Smith & Partners Law"), the partner's surname is considered public-facing branding and is not treated as personal information.

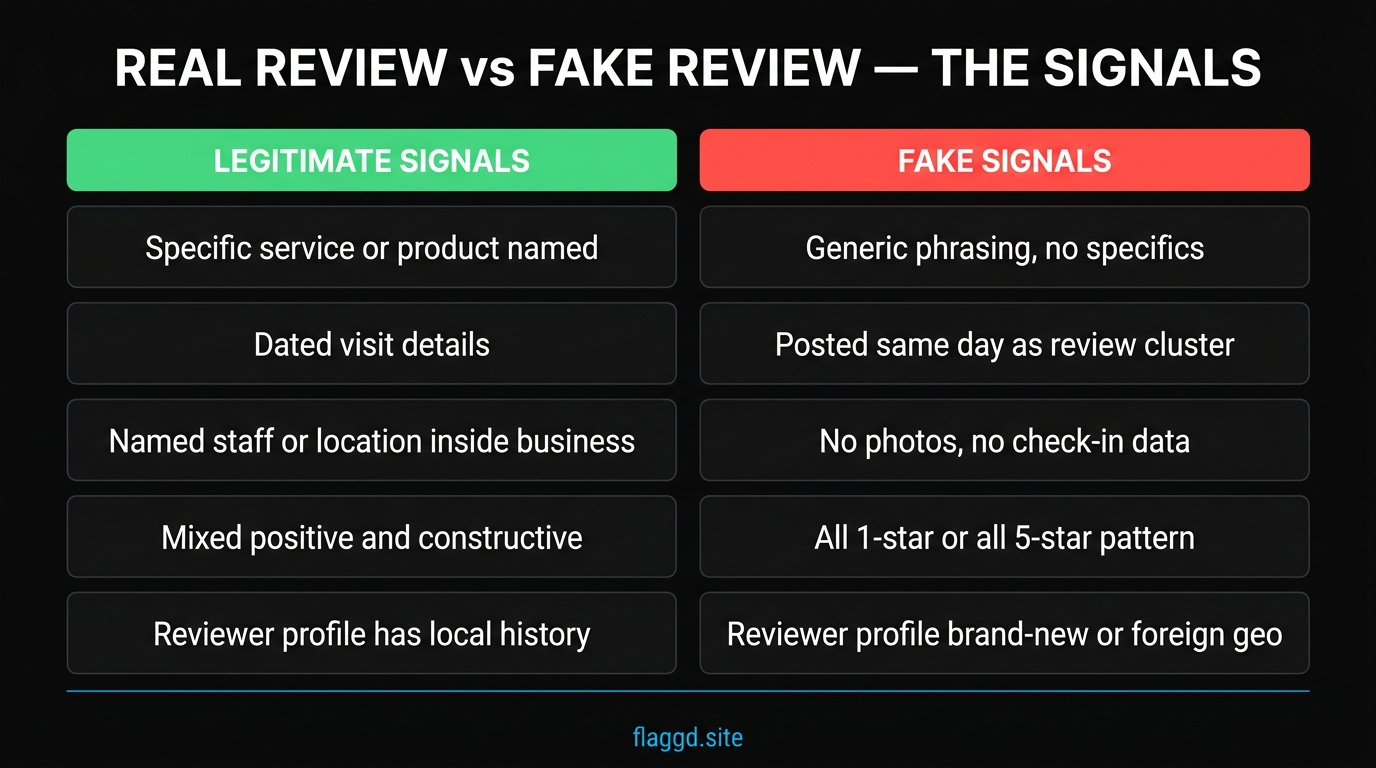

Real review signals vs. fake review signals

Before you file a dispute, pattern-match the review against the two signal sets below. If most of the signals land in the left column, you probably have a legitimate negative, and a dispute is unlikely to succeed. If most land in the right column, you likely have a fake-engagement case that will hold up under appeal.

No single signal is decisive. A new reviewer account is not proof of fakery; everyone's first review has to happen somewhere. But three or four stacked signals (new account, no local history, same-day posting cluster, generic phrasing, all-1-star voting pattern) are the combination that usually clears moderation.
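The stacking idea can be sketched as a simple tally. This is a minimal illustration for triaging your own dispute queue, not Google's moderation logic: the `ReviewSignals` fields, the function names, and the default threshold of three are all assumptions based on the signals named above.

```python
from dataclasses import dataclass, fields


@dataclass
class ReviewSignals:
    """Hypothetical checklist of the fake-review signals named above."""
    new_account: bool = False
    no_local_history: bool = False
    same_day_cluster: bool = False
    generic_phrasing: bool = False
    all_one_star_pattern: bool = False


def stacked_signal_count(signals: ReviewSignals) -> int:
    """Count how many of the checklist signals are present on one review."""
    return sum(bool(getattr(signals, f.name)) for f in fields(signals))


def looks_disputable(signals: ReviewSignals, threshold: int = 3) -> bool:
    """Assumed rule of thumb: three or more stacked signals justify a dispute."""
    return stacked_signal_count(signals) >= threshold


lone = ReviewSignals(new_account=True)
stacked = ReviewSignals(
    new_account=True,
    no_local_history=True,
    same_day_cluster=True,
    generic_phrasing=True,
)
print(looks_disputable(lone))     # False
print(looks_disputable(stacked))  # True
```

A plain count treats every signal as equally strong. In practice you may want to weight some signals more heavily, but an unweighted tally is enough to stop single-signal disputes from reaching the queue.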

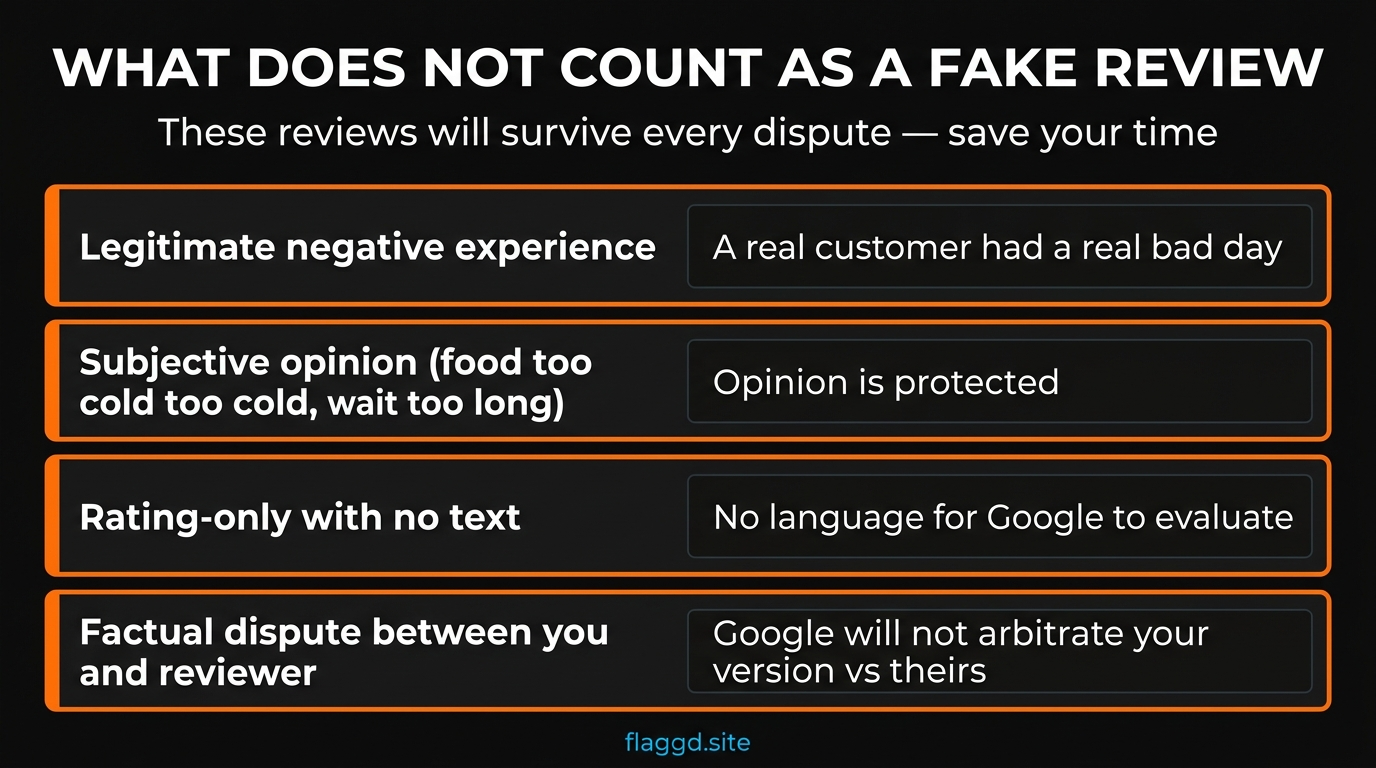

What does NOT count as a fake review

Flagging these four categories as fake is the single fastest way to burn your dispute credibility and waste two weeks of calendar time. Save your appeals for reviews that will actually come down.

- A legitimate negative experience. A real customer had a real bad day. Your fix here is operational — respond publicly, address the root cause, and build up counterbalancing positive reviews over time.

- A subjective opinion. "The food was cold," "the wait was long," "the lawyer wasn't responsive" — these are protected opinion under Google's guidelines, even when you disagree. They do not violate any policy.

- A rating-only review with no text. A single 1-star with no words is almost impossible to remove. There is no language for Google's algorithm or a human moderator to evaluate. Unless you can prove coordinated fake engagement (a burst of rating-only 1-stars from new accounts), set this aside.

- A factual dispute between you and the reviewer. Google explicitly states it does not arbitrate disputes over facts between business owners and reviewers. If your argument is "this never happened" without third-party evidence, you will not prevail.

Fake positive reviews — the category businesses miss

Almost every conversation about fake reviews focuses on negatives. But Google's fake-engagement policy applies to positive reviews the same way — and in 2026, the stakes are significantly higher for positives than for negatives.

The FTC's Consumer Reviews Rule (16 CFR Part 465), which took effect in October 2024, makes purchased, incentivized, or undisclosed insider-generated positive reviews illegal under US federal law. Penalties run up to $53,088 per fake review, with each individual review counted as a separate violation. In December 2025 the FTC sent warning letters to the first wave of businesses under this rule. We cover the full breakdown in the FTC fake review rule 2026 guide.
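Because each review counts as a separate violation, the theoretical ceiling scales linearly with the number of fake reviews. The short sketch below only does that multiplication. The function name is hypothetical, the per-review figure is the maximum quoted above, and an actual penalty would depend on the enforcement action.

```python
MAX_PENALTY_PER_REVIEW_USD = 53_088  # per-review maximum quoted above


def max_exposure_usd(fake_review_count: int) -> int:
    """Upper bound on exposure if every fake review drew the maximum penalty."""
    if fake_review_count < 0:
        raise ValueError("fake_review_count must be non-negative")
    return fake_review_count * MAX_PENALTY_PER_REVIEW_USD


# 25 purchased reviews at the maximum per-review penalty
print(f"${max_exposure_usd(25):,}")  # $1,327,200
```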

What this means practically: if a competitor is inflating their own profile with fake 5-stars, flagging those under fake engagement is a legitimate policy-based dispute, not a petty complaint. And if you have ever bought, incentivized, or had employees post reviews without disclosure on your own profile, clean that up before an enforcement round lands.

How Google actually detects fake reviews

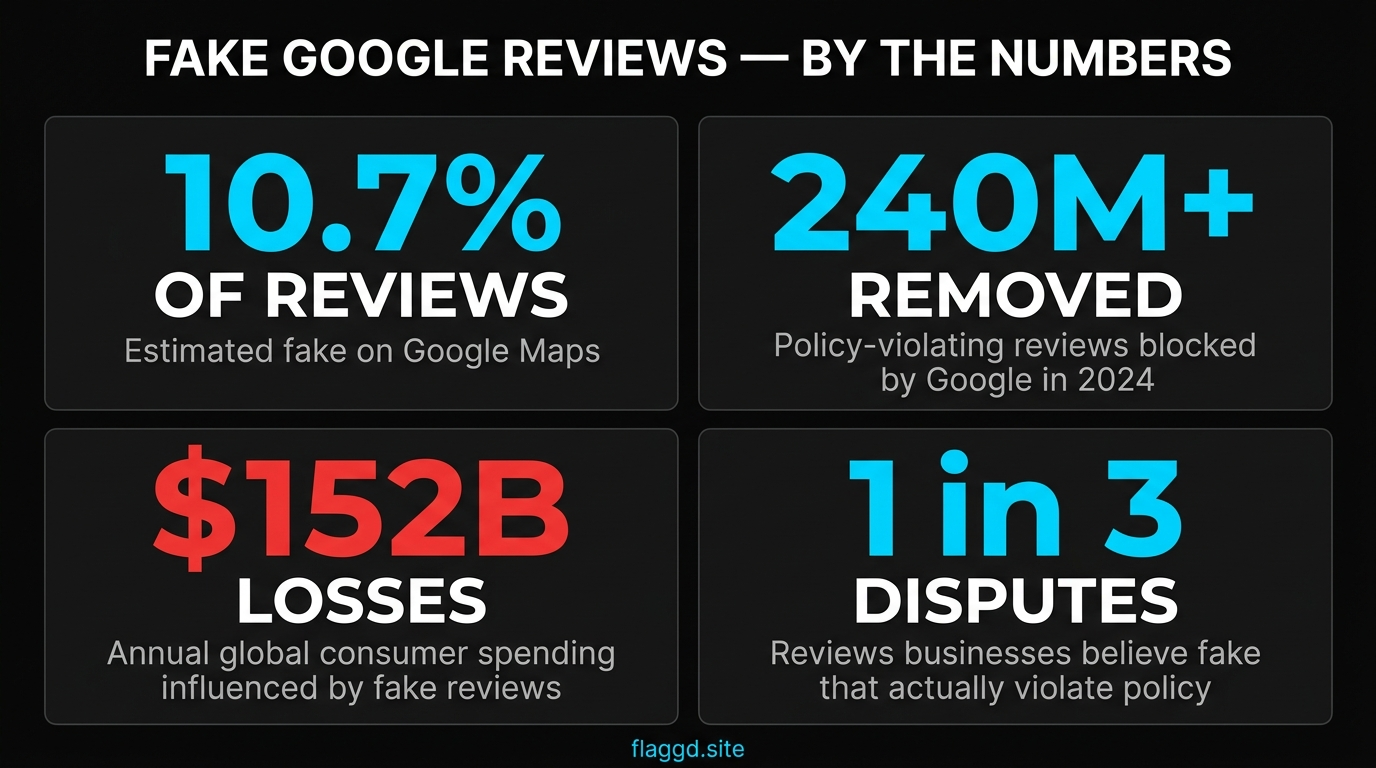

Google's automated detection has improved dramatically. In 2024 alone, Google removed or blocked more than 240 million policy-violating reviews — the vast majority through automated filters before they were ever flagged by a business. The system looks at:

- Text signals. Repetition across accounts, known spam phrases, language that overlaps with previously removed content, and incoherent or generic phrasing.

- Account signals. Reviewer profile age, review frequency, geographic distribution of reviews, and cross-platform identity consistency.

- Temporal signals. Burst patterns (five 1-stars in two hours on a profile that usually gets two reviews a month), coordinated timing across accounts, and activity from known review-farm infrastructure.

- Network signals. Reviewers who have previously reviewed the same set of businesses in the same industry — a common fingerprint of coordinated reputation attacks.
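Of these signal families, the temporal burst is the easiest to check against your own review history before filing. The sketch below is an assumption-laden illustration, not Google's detector: `has_burst` is a hypothetical helper, and the two-hour window and five-review minimum are taken from the example in the list above.

```python
from datetime import datetime, timedelta


def has_burst(timestamps, window=timedelta(hours=2), min_count=5):
    """Return True if at least `min_count` reviews fall inside any `window`."""
    ordered = sorted(timestamps)
    start = 0
    for end, ts in enumerate(ordered):
        # Slide the left edge forward until the span fits inside the window.
        while ts - ordered[start] > window:
            start += 1
        if end - start + 1 >= min_count:
            return True
    return False


base = datetime(2026, 3, 1, 9, 0)
# Five 1-star reviews posted within 100 minutes of each other
attack = [base + timedelta(minutes=m) for m in (0, 20, 40, 60, 100)]
# Five 1-star reviews spread one per day
organic = [base + timedelta(days=d) for d in range(5)]

print(has_burst(attack))   # True
print(has_burst(organic))  # False
```

Feed it only the timestamps of the 1-star reviews you are questioning. A burst on its own is still just one signal, so treat a `True` result as a reason to look at the accounts involved, not as proof.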

The algorithm is strict enough that it sometimes removes legitimate reviews too, which is how some small businesses saw their review counts drop during the February–March 2026 mass-removal sweep. Automated accuracy is not the same as human judgment, and the appeal process exists precisely because errors happen in both directions: fake reviews that stay up and real reviews that come down.

Frequently asked questions

One last thing: the difference between a removal that succeeds and one that fails is almost never about how unfair the review feels. It's about how cleanly the review maps to one of these seven categories, and how much documentary evidence you bring. Build the case on policy, not emotion, and your success rate compounds.